👋 Hey, it’s Stephane. I share lessons and stories from my journey to help you lead with confidence as an Engineering Manager. To accelerate your growth, see: AI Interview Coach | 50 Notion Templates | The EM’s Field Guide | CodeCrafters | Get Hired as an EM | 1:1 Coaching

Paid subscribers get 50 Notion Templates, The EM’s Field Guide, and access to the complete archive. Subscribe now.

Nobody gets rejected from an Engineering Manager role because they couldn’t invert a binary tree. They get rejected because, when someone asked “Tell me about a time you influenced a technical decision across teams”, they rambled for four minutes and never got to the point.

I’ve been on both sides of this. As a hiring manager, I’ve watched brilliant engineers give behavioural answers so vague they could apply to literally any job. As someone who’s interviewed for leadership roles myself, I’ve felt the particular panic of knowing you’ve done the work but not being able to find the words for it during the interview.

The technical rounds get all the prep. But it’s often the behavioural interviews that lead to rejections - and almost nobody prepares for them properly.

The preparation

Think about how engineers prepare for technical interviews. They grind LeetCode for weeks. They do system design practice with friends. They buy courses, read books, time themselves solving problems. The preparation infrastructure is enormous.

Now think about how those same engineers or engineering managers prepare for behavioural interviews. They Google “common behavioural interview questions” the night before. They read the list, think “yeah, I could answer those” and go to bed. Maybe they skim the company’s leadership principles on the morning of.

That asymmetry would be fine if behavioural rounds didn’t matter much. But at the senior, staff, and management levels, they often matter more than the technical rounds. At Amazon, your leadership principles performance can tank an otherwise strong packet. At Google, the hiring committee weighs “Googleyness and leadership” alongside coding. At Meta, the behavioural bar for M1 and above is explicit and high.

You can ace every technical screen and still get a “no hire” because your behavioural answers were bad. (This is the problem I built my AI interview coach to solve - more on that below.)

Why this round is so hard

Coding is explicit. You write a function, it works or it doesn’t, and the logic is visible on screen. Leadership is the opposite. Your best work is often the stuff nobody saw: the conflict you defused before it reached the team, the reorg you navigated without losing anyone, the conversation with a product manager that prevented a three-month detour.

None of that feels like a “story”. It feels like Tuesday. So when an interviewer asks “tell me about a time you dealt with a difficult stakeholder”, experienced engineers skip over the genuinely impressive thing they did and reach for something more dramatic that they can’t explain as well.

There’s a cultural problem too. Engineering culture rewards “let the work speak for itself”, which is fine for the day job but disastrous in an interview where you’re literally being asked to speak for your work. Engineers who resolved serious team conflicts describe their role as “I just facilitated a conversation”. Engineers who changed how their entire org approached technical debt say “it wasn’t really a big deal”. The instinct to minimise is strong, and it’s the opposite of what a behavioural interview rewards.

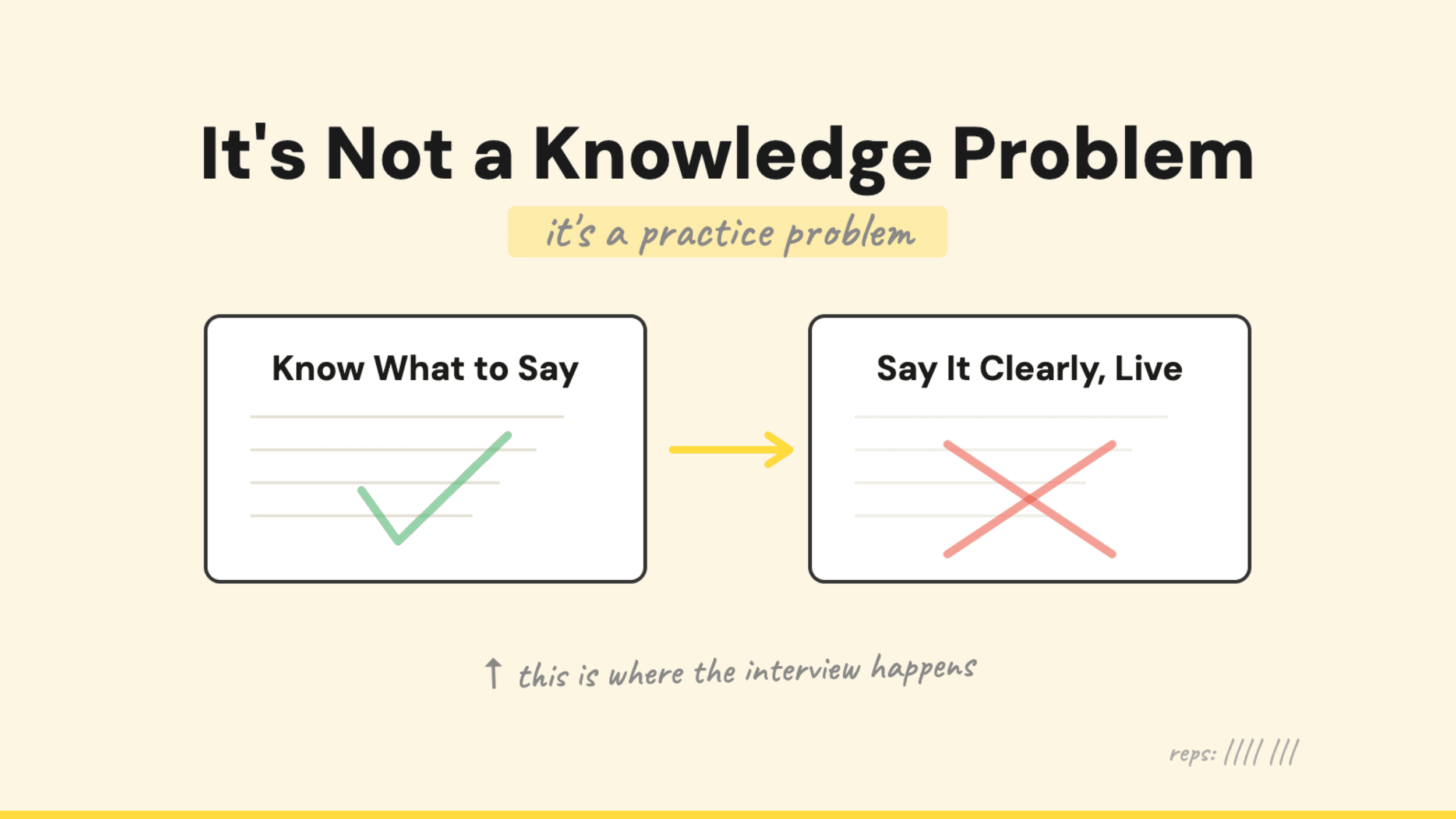

And then there’s the practice problem. You can do a hundred LeetCode problems alone at your desk. You can’t practise behavioural answers alone at your desk - or at least, you can’t practise them well. You need to say the words out loud, to someone who will push back, ask follow-ups, and tell you where your answer fell apart. The gap between how a story sounds in your head and how it sounds when you actually say it to another person is enormous. Most engineers discover this gap in the interview itself, which is the worst possible time to discover it.

What actually makes a strong behavioural answer

Before I get to what I built, it’s worth being specific about what interviewers at this level are listening for - because it’s not what most people think.

They’re not looking for a polished performance. They’re looking for evidence of judgment. When this person faced an ambiguous situation with competing priorities and no clear right answer, what did they do? How did they reason about it?

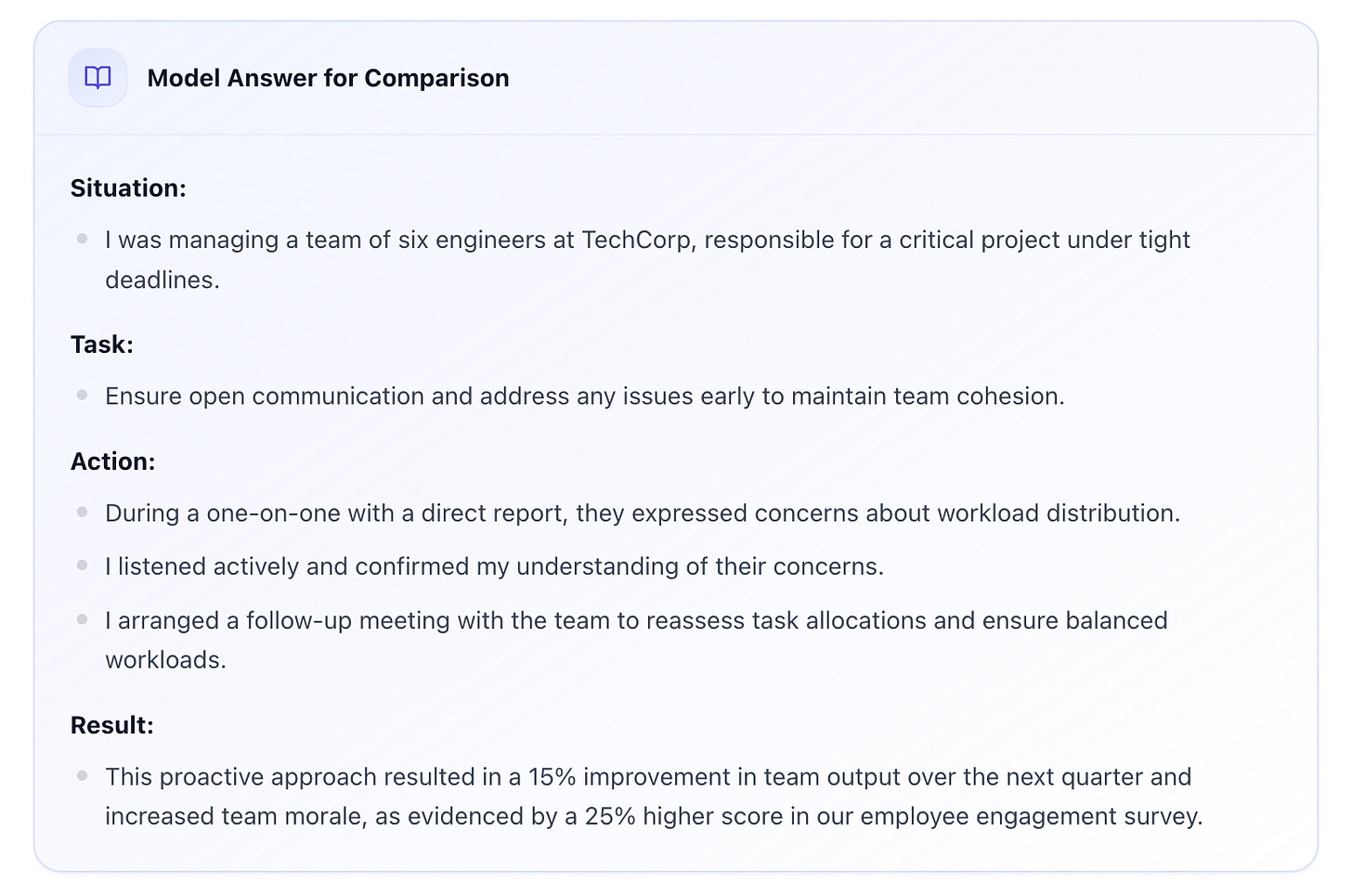

That means the strongest answers share a few qualities. They’re specific, with numbers and trade-offs: not “we had a tight deadline” but “we had six weeks to migrate 200,000 users and the product team wanted to ship a new feature in the same window”. They have a clear decision point - a moment where you chose one path over another and can explain why. And they show self-awareness - what you’d do differently, what you missed, what you learned.

Following the STAR framework mechanically isn’t enough. “The situation was X, the task was Y, the action was Z, the result was W” sounds like a book report about your own career. The texture matters - the follow-up probes are where the real evaluation happens, and most engineers haven’t practised going a layer deeper than the surface story.

So I built something to close this gap

I kept running into this problem. Engineers and managers I managed, mentored, or interviewed - strong technical leaders who couldn’t talk about their own leadership. The fix was always the same: practise out loud, get feedback, iterate. But the options for doing that were limited.

A human interview coach costs £200–700 per session (I am just below that). Friends are helpful but rarely give honest, structured feedback - they don’t want to tell you your answer was weak. And practising in your head doesn’t work because you never discover the gaps until you’re speaking to another person.

So I built a Voice AI Interview Coach specifically for engineering leaders.

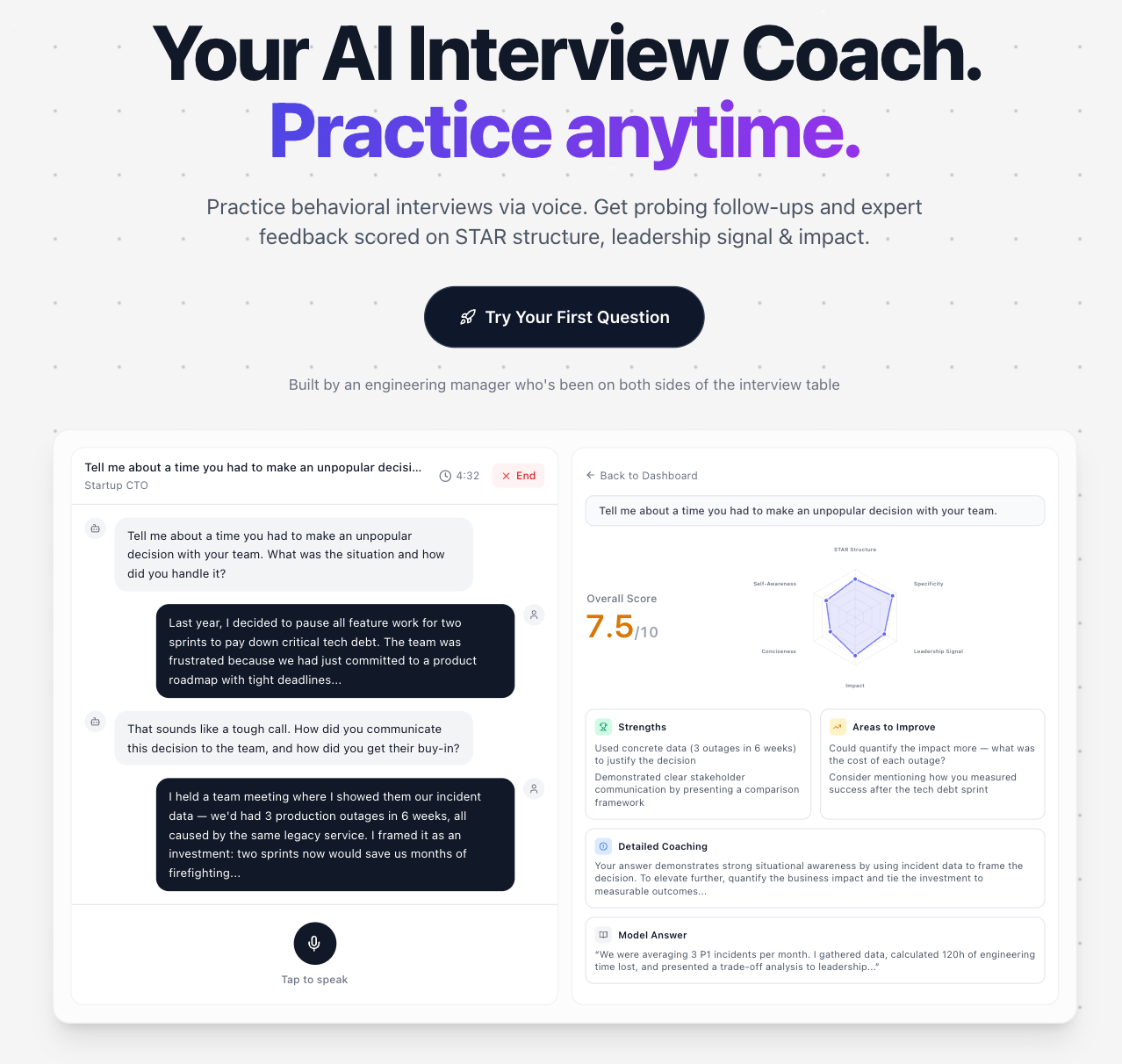

How it works. You pick a behavioural question from a bank of 130+ questions pulled from real interviews at Google, Amazon, Meta, and Stripe. You answer by voice, like in a real interview. The AI doesn’t just listen passively - it follows up with probing questions, exactly the way a strong interviewer would. “What did the other person think about that?” “How did you measure the impact?” “What would you do differently?”

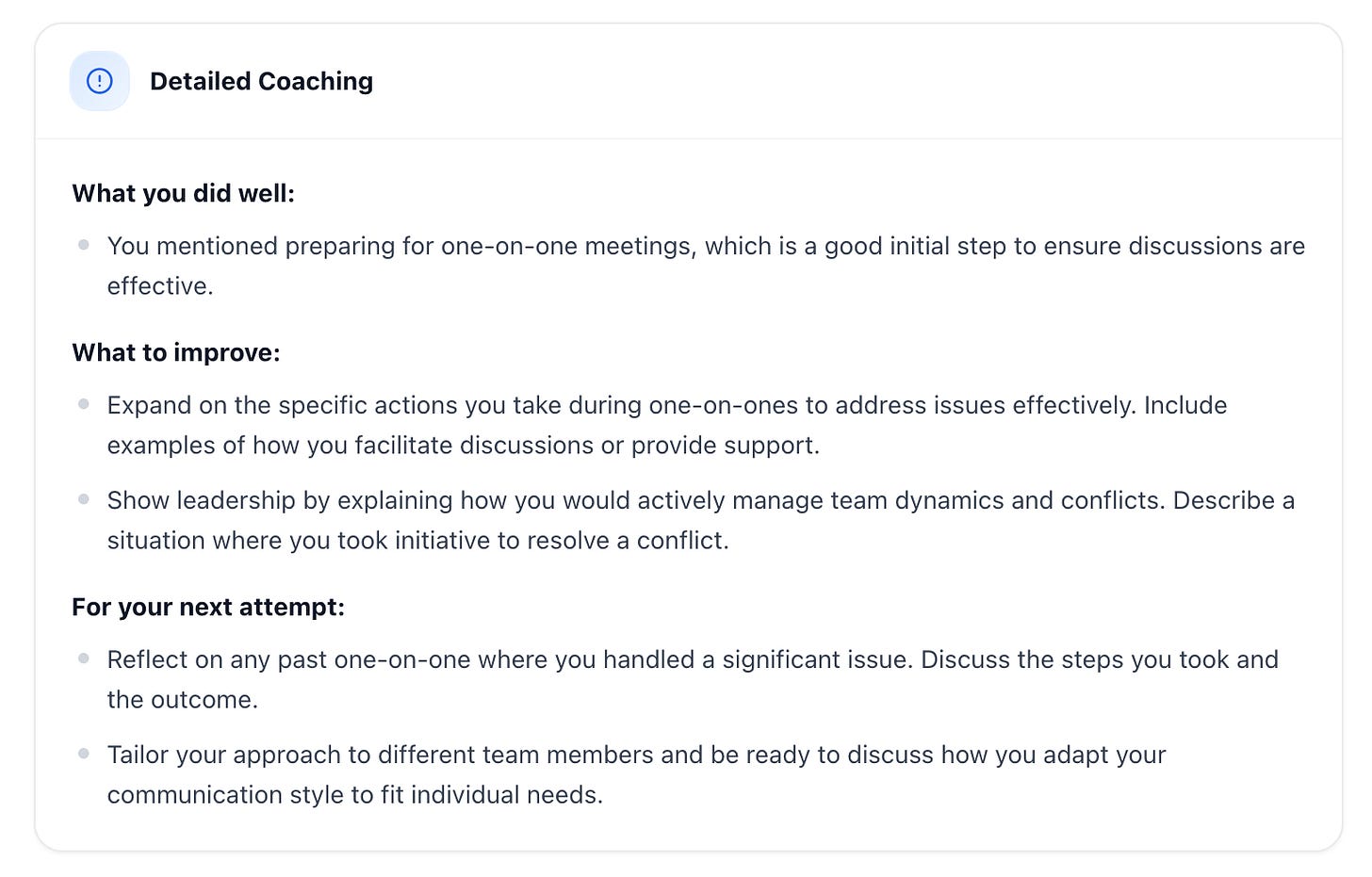

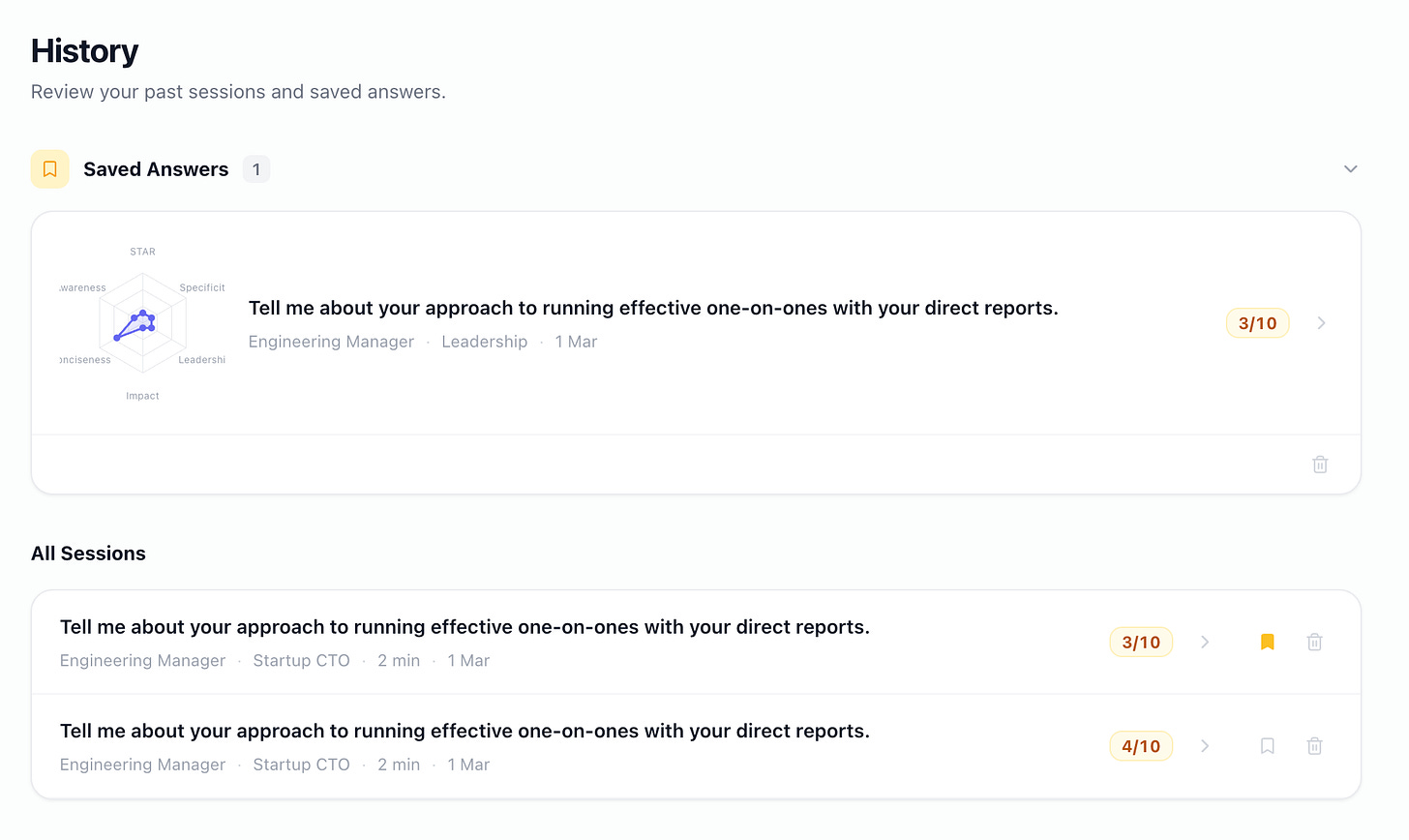

After the conversation, you get a detailed scorecard across six dimensions:

- STAR structure
- Specificity
- Leadership signal
- Impact articulation
- Conciseness
- Self-awareness

Not a generic “good job” - actual scoring with specific quotes from your answer and concrete suggestions for improvement. Plus a model answer so you can see what a strong response looks like.

The questions are categorised by role (Engineering Manager, Senior EM, Senior Engineer, Staff/Principal) and by competency - leadership, conflict resolution, technical decision-making, stakeholder management, delivery, hiring, the full spread. You can practise the same question as many times as you want. Most people score noticeably better by their third or fourth attempt, which is exactly the point.

ChatGPT won’t get you there

I know what you’re thinking. “I’ll just practise with ChatGPT” - and I’ll tell you now, I’ve tried it and it was just not good enough. That’s why I built this product for myself in the first place.

Yes, you can talk to ChatGPT and get a spoken response. But there’s a difference between a general-purpose chatbot that can do voice and a tool built specifically for interview practice. A few differences, actually.

First, getting ChatGPT into a mode where it behaves like a competent interviewer takes real prompt engineering. You need to tell it which role you’re interviewing for, what competency to assess, how to probe, when to push back, what scoring criteria to use. You will either spend hours prompting it, or get a very mediocre experience (you’ll be hearing “That’s a great answer!” a lot).

Second - and this was my bigger problem - nothing is saved in a form you can use. Your answer sits somewhere in the chat history, but it’s difficult to compare your first attempt to your fourth. You can’t easily review what you said last Tuesday and figure out why that version was stronger. There’s no scorecard, no progression tracking, no way to see whether you’re actually getting better or just getting more comfortable hearing yourself talk.

Third, ChatGPT doesn’t know what good looks like for your specific level. The follow-up questions an interviewer asks a senior engineer are different from the ones they ask an EM or a staff engineer. The evaluation criteria are different. What counts as strong “leadership signal” at L5 versus L7 is different. A general-purpose AI treats all of these the same way.

The Voice AI Interview Coach was built for exactly this problem. You pick from 130+ questions pulled from real interviews at Google, Amazon, Meta, and Stripe - categorised by role level and competency. You answer by voice. The AI follows up the way a trained interviewer would: “What did the other person think about that?” “How did you measure the impact?” “What would you do differently?” Not generic encouragement. Actual probing.

After the conversation, you get a detailed scorecard across six dimensions - with specific quotes from your own answer and concrete suggestions for improvement.

Plus a model answer, so you can see what a strong response actually sounds like at your level.

Every attempt is saved. You can practise the same question five times, or as many times as you want, and watch your scores climb, which tells you something important: the practice format itself was the bottleneck.

(Your first question is free. Try it once and you’ll feel the difference between a chatbot that can talk and a tool that can coach.)

The maths

A single session with a human interview coach can cost more than a full year of this tool. For £24.99/month (about £300 over twelve months) you get unlimited practice across every question, every role level, every competency. Your first question is completely free, no account needed.

If you’ve got an interview coming up, the ROI on a few hours of structured behavioural practice is hard to beat. The difference between a first attempt and a fifth attempt is usually the difference between “not enough signal” and “strong hire”.

I’ve been on both sides of the interview table for years. This is the tool I wish existed when I was preparing for my own leadership roles, and the one I now point engineers toward when they’re getting ready for theirs.

See you in the next one,

~ Stephane